Notable links: April 3, 2026

A dystopian week, with a glimmer of hope: alternatives are available.

Most Fridays, I share a handful of pieces that caught my eye at the intersection of technology, media, and society.

Did I miss something important? Send me an email to let me know.

The open web isn't dying. We're killing it

Julien Genestoux is right:

“Why did we keep outsourcing identity, distribution, and monetization to companies whose incentives were obviously misaligned with ours?

[…] It is because, collectively, we preferred the short-term consumer surplus of convenience over the long-term responsibilities of stewardship.”

We can raise the alarm about the demise of the open web all we want, but the truth is that other solutions were quicker and easier — even for many of us who held up the open web banner.

Julien’s proposal is that we should think of ourselves as netizens rather than just consumers. I actually think that this is driving a lot of the innovation in the ATproto ecosystem in particular, but also on the Fediverse. People in those spaces have intentionally moved somewhere new where they can have a credible exit, can export their data cleanly, and can feel like they’re having safer, more productive, less fascistic conversations.

But the money piece isn’t there. The running joke is that the Fediverse hates money — conversations about revenue or capitalism are very often shut down early, and people who try to fundraise are often criticized — but money has been an ongoing issue in ATproto land, too. If people are going to build good things, they need to be able to eat and pay rent so they can keep doing it. I’d argue that, yes, you do need netizens, and I’m very excited to see a resurgence in this kind of movement across the open social web in particular. We need more netizens, and the more there are, the more likely it is that people will pay for the right kind of services.

I work for a newsroom that people often donate to out of a sense of catharsis — a gratitude that something is being done in a world where they feel powerless. I think there’s something to learn from here too. In the past, I’ve argued that highly ideological tech spaces need more product thinking so that we can more sharply identify valuable solutions to people’s problems, and there’s still truth in that — but I’ve learned that sometimes the product value is agency in the face of powerlessness rather than a set of features. There may well be value in leaning right into the anti-big-tech angle on the open web. What might it look like to put people’s distrust of X, Google, Microsoft, et al. front and center, and put up a fundraising banner like Wikipedia does?

I think we can take Julien’s point about netizens and connect it directly to the idea of citizens. People see what’s going on in the world and know that tech companies are intertwined with it. Some of them — not most of them, but a reasonable number — may want to do something about that. Not because they believe in an open web as such, or even know what that is, but because they believe in an open society. Using this kind of messaging would be overtly political in a way that tech is sometimes afraid to be, but we’ve seen similar messaging create interest in funding alternatives to US big tech in Europe, for example (and result in actual funding). I think the interest is there to move away from the tech powers-that-be globally, but engaged citizens don't always know what to concretely do about it. We can bring our message to them.

We need more netizens and citizens both, and we should be talking about this more. Rather than de-emphasizing the ideology of the open web in favor of more proximate product value, which is a thing I’ve sometimes argued for in the past, we should accept that it is a work of engaged citizenry that verges on activism. Embracing that could find us aligned people outside of our existing development circles who might be interested in broadening our impact. I’d like to see us try.

An AI company set out to fix news deserts. Instead, it copied local journalists’ work

Repeat after me: AI cannot write journalism and should never be used in place of a journalist. I believe it can be a very useful tool — but it is a tool for humans.

So this whole initiative was misconceived:

“Artificial intelligence company Nota — whose clients include organizations like The Boston Globe and the Institute for Nonprofit News — is scrapping its network of local news sites after learning that they contained dozens of instances of plagiarism. […] The 11 sites — collectively called Nota News — launched in September as an effort to bring “bilingual local reporting and civic tools to underserved communities.””

The deal here was that the company would identify news deserts: places that were unserved or underserved by real newsrooms. And then it would try to serve those areas with content created by an LLM-based system.

This was inevitably going to plagiarize existing journalism, because what other source could it possibly use? An agentic system can’t do the on-the-ground research and reporting work involved in creating a story. It can gather together data points and turn them into something that looks like news, rather than journalism: sports scores, city council votes, and that kind of thing. But it can’t provide context if someone hasn’t already written it.

As the linked Poynter article points out:

“The articles were supposed to be based on publicly available civic information, such as press releases and videos of city council meetings. In reality, Poynter found more than 70 stories dating back to October that included reporting, writing and photography from local journalists without attribution.”

Someone had already written it: human journalists whose work was subsequently incorporated without attribution. The eleven human editors who used the LLM tools to generate content apparently didn’t realize that this work had been drawn into the mix. Again: that was inevitably going to happen as the stories began to not just say what had happened but explain why.

The AI hype cycle has created a bunch of really regrettable case studies that other organizations should learn from. This is one. There are more like it, where good intentions lead to accidental plagiarism (or hallucinations). There are plenty of stories where organizations have prematurely let people go because they incorrectly think they can replace human initiative with software. And all of them come down to believing a science fiction version of what this technology does instead of the actual reality of it.

That’s understandable: the reality is shifting quickly, and the marketing machine is incredibly strong. But everyone needs to take a breath with AI and get themselves to a more nuanced understanding of what it is — and isn’t.

LinkedIn Is Illegally Searching Your Computer

This is quite a serious accusation:

“Every time any of LinkedIn’s one billion users visits linkedin.com, hidden code searches their computer for installed software, collects the results, and transmits them to LinkedIn’s servers and to third-party companies including an American-Israeli cybersecurity firm.”

This is an EU-based site, hence the reference to the location of the cybersecurity firm. The authors are quick to point out that they believe this scanning is illegal in the EU.

The claim is also a little hyperbolic. “Installed software” makes it sound like LinkedIn is scanning your whole computer. In reality, it’s checking for browser extensions. That’s a fairly common component of modern browser fingerprinting: at this point it’s well known that the particular mix of extensions, fonts, and other features available to a browser can be used to track individuals across the web without cookies.

That’s not to say that it’s not invasive — it clearly is!

Browser extensions can reveal a lot of identifying information: a person’s religion, sexuality, interests, political orientation, and so on. The implication is that this is especially bad here because LinkedIn knows the identity of its logged-in users; as a result, it could use this information, for unknown purposes, to hydrate the profiles of specific, known individuals who use its site. It’s a little over the top to call this espionage, as the linked site does, but it’s an abuse of trust that is certainly worth calling out.

I was mostly interested in how this works; if LinkedIn is doing it, then others surely are too. The answer seems to be a set of JS calls that work in most Chromium-based browsers (Chrome, Edge, Arc, Dia, etc.). They’re checking for over six thousand extensions that they know and care about, each of which has a specific “tell” that a website can check for. Then they check whether anything has modified the page, to catch extensions that weren’t on their list. They also check, cheekily, whether you have “Do Not Track” switched on, but track you regardless (it’s just another part of the fingerprint to them). Finally, they gather everything from your screen size and CPU type to your battery level.

This all does double duty: the resulting fingerprint is detailed enough to track you and notice when you’re using a different computer or have changed your settings, and it can also be used to profile you for profit.
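To make the mechanism a bit more concrete, here’s a minimal Python sketch of how a fingerprint like this could be assembled. It is not LinkedIn’s code, which the linked analysis describes as JavaScript running in the browser. It assumes the commonly described approach of probing each known extension for a “tell” (such as a file the extension exposes to web pages), and it models that probe as a plain function so the logic runs outside a browser. The extension IDs, resource paths, and signal names are hypothetical placeholders.

```python
import hashlib
import json
from typing import Callable, Mapping

# Hypothetical list of extensions to look for: extension ID -> a resource path
# the extension exposes to web pages (its "tell"). Placeholders, not real IDs.
KNOWN_EXTENSION_TELLS: dict[str, str] = {
    "aaaaexampleextensionidaaaaaaaaaa": "icons/logo.png",
    "bbbbexampleextensionidbbbbbbbbbb": "content/inject.js",
    "ccccexampleextensionidcccccccccc": "assets/banner.svg",
}


def detect_extensions(resource_exists: Callable[[str], bool]) -> list[str]:
    """Return the IDs of known extensions whose tell resource can be loaded.

    In a real browser the probe would be a request to a URL like
    chrome-extension://<id>/<path>; here it is injected so the sketch
    runs anywhere.
    """
    found = []
    for ext_id, path in KNOWN_EXTENSION_TELLS.items():
        if resource_exists(f"chrome-extension://{ext_id}/{path}"):
            found.append(ext_id)
    return sorted(found)


def fingerprint(signals: Mapping[str, object]) -> str:
    """Hash a set of browser signals into a single identifier.

    Serializing with sorted keys means the same signals always produce the
    same hash, regardless of the order they were collected in.
    """
    canonical = json.dumps(signals, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    # Simulate a browser where only the first hypothetical extension is installed.
    installed = {"chrome-extension://aaaaexampleextensionidaaaaaaaaaa/icons/logo.png"}
    extensions = detect_extensions(lambda url: url in installed)

    signals = {
        "extensions": extensions,
        "do_not_track": True,  # recorded as just another signal, not honored
        "screen": [2560, 1440],
        "cpu_cores": 8,        # hypothetical stand-in for CPU details
        "page_modified": False,
    }
    print(extensions)
    print(fingerprint(signals)[:16])
```

Because the hash depends on every signal, installing one more extension or flipping Do Not Track changes it. A tracker that wants to recognize the same person after a change would compare the individual signals rather than only the final hash; that matching step is left out of this sketch.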

The quickest solution is to use Firefox, which blocks these kinds of fingerprinting attacks. Zen Browser, which is based on the Firefox core, is my day-to-day browser, and I love it. But Chromium-based browsers need to do more to stop fingerprinting, and jurisdictions like the EU need to ban the practice outright.

What Digital Isolation and Censorship Evasion Look Like In Wartime Iran

I’ve worried that the internet will become a casualty of our worsening global politics. The inherent co-operation needed to let global networks talk to each other is aligned with an open world but not so much with an authoritarian one. Walled-off national internets — often called splinternets — may become more common.

While I worry about this for the US as the authoritarian screws continue to tighten, this is the concrete reality for people in Iran and 20 other countries today. As this piece in Tech Policy Press points out:

“The cybersecurity company Surfshark recorded 81 new internet restrictions in 2025 across 21 countries, pointing to evolving patterns of repression. Out of the 81 restrictions Surfshark tracked, 51 of these restrictive measures were taken in response to political situations.”

News and information need to be shared differently in this kind of environment: the digital distribution techniques we’ve spent the last few decades learning simply don’t apply. Last year, in Building distributed media for a democratic breakdown, I wrote about how we might learn from Cuba’s El Paquete Semanal and take advantage of both sneakernets and peer-to-peer networks to overcome these kinds of blockades. In a restrictive environment, people need news and journalism more than ever.

I wrote that piece somewhat speculatively, but the reception to it in journalistic circles took me by surprise. It’s an idea and a worry that people are taking seriously. And over the last six months, in a world that has seen more conflict, more restrictions, and more attacks on free speech, there has only been more reason to take it seriously.

The Right Is Using AI Content Scanners to Try to Supercharge Book Banning

This AI-powered effort is intentionally designed to create chilling effects and reduce support for vulnerable communities:

“Conservative parents’ advocacy groups have been experimenting with using commercially available artificial intelligence tools to help them flag more books they’ve deemed pornographic to be removed from public schools and libraries. Even though LLMs are notoriously error-prone, and the books in question aren’t pornographic, these groups continue to explore use cases for AI anyway.”

According to 404 Media’s reporting, the script uses a list of 300 or so words as the basis of a heuristic that assigns an “appropriateness” score to each book. If a book is deemed inappropriate, the script creates an automated report designed to be attached to book challenges at the school district level.

This reminds me of DOGE itself, which used a keyword-based system to flag “woke” grants for defunding, leading to some incredibly dumb decisions about what should go:

“Among them, for example, was a $470,000 grant to study the evolution of mint plants and how they spread across continents. As best we can tell, the project ran into trouble with Republicans on the Senate Committee on Commerce, Science and Transportation because of two specific words used in its application to the NSF: “diversify,” referring to the biodiversity of plants, and “female,” where the application noted how the project would support a young female scientist on the research team.”
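A toy version of this kind of keyword scoring shows why it misfires. The Python sketch below does not reproduce the script 404 Media described or the system used on the grants; its word list, weights, and threshold are invented for illustration, using only the two words from the grant example. It scores text by counting whole-word matches, with no notion of what the words mean in context.

```python
import re

# Hypothetical flag list and weights, built from the two words in the grant
# example above. Not the actual list used by any real system.
FLAGGED_TERMS: dict[str, int] = {
    "diversify": 2,
    "female": 1,
}
THRESHOLD = 2  # hypothetical cutoff: a score at or above this gets flagged


def score(text: str) -> int:
    """Sum the weights of every flagged term that appears as a whole word."""
    words = re.findall(r"[a-z]+", text.lower())
    return sum(FLAGGED_TERMS.get(word, 0) for word in words)


def is_flagged(text: str) -> bool:
    """Flag the text if its keyword score reaches the threshold."""
    return score(text) >= THRESHOLD


if __name__ == "__main__":
    abstract = (
        "We study how mint plants diversify as they spread across continents. "
        "The project will also support a young female scientist."
    )
    print(score(abstract), is_flagged(abstract))  # prints: 3 True
```

Nothing in the score distinguishes plant biodiversity from whatever the list was meant to target: context is simply not an input.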

These techniques are clearly inaccurate, but that’s not the point: they’re enough to cause havoc and make people second-guess publishing books on certain topics. It’s the same culture of chaos that led to a school librarian being fired for (correctly) refusing to move over a hundred LGBTQ books from the children’s section to the adult section of her library. And it’s all designed to harm some of the people who need the most support.

The DOJ thinks news is contraband

This Freedom of the Press Foundation piece is highly relevant to how one might think about source materials in any kind of newsroom that accepts tips from others. It might not be obvious to outsiders, but newsrooms don’t facilitate tips directly from sources: material has to be volunteered without participation from the newsroom. Aside from generic instructions, nobody’s helping sources to do it.

A Biden-era precedent, now leapt on enthusiastically by the Trump administration, treats those materials as contraband regardless of how they were obtained, and the administration has expanded that definition to include interviews with anyone who’s not approved to speak on the record. That line of thinking justified the raid on Washington Post reporter Hannah Natanson, in which authorities took terabytes of data. Natanson had, just a month prior, published her account of being an engagement reporter whose job included receiving tips from the federal government. In more normal times, that account would not have made her a target.

It was previously found, in the aftermath of Daniel Ellsberg’s Pentagon Papers case, that newsrooms could publish information that was leaked to them. That’s a vital foundation for journalism and a free press, and therefore our ability to make informed democratic decisions. But this new precedent undermines that principle, and therefore our ability to understand the world around us. As the Freedom of the Press Foundation put it:

“The Pentagon Papers case stands for the proposition that the government cannot suppress the publication of truthful information of public concern, even when it would very much like to. The contraband theory is an attempt to achieve the suppression indirectly — by redefining journalists’ work product as something illicit that the government can confiscate.”

As they point out in the piece, legislation is in the works to rein these abuses in, and the judge in a pending court case has the opportunity to stand up for the First Amendment and a free press.

But there’s everything to play for. In the current political environment it’s not a slam dunk that our right to understand the world around us through investigative journalism will be upheld. We need it to be if we want to have any hope of holding people with power accountable.

The White House has an app now, and Trump wants you to report people to ICE on it

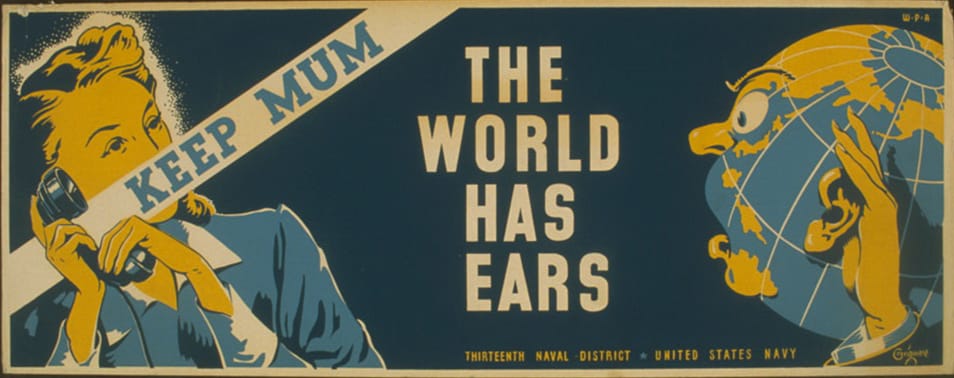

A little history lesson from the Wiener Holocaust Library:

“In Nazi Germany, some citizens passed on information about their neighbours, family, and friends to the Gestapo. This was called informing. Nazi propaganda presented the Gestapo as an omnipresent, all-seeing, all-knowing group, but in reality there was just one secret police officer for approximately every 10,000 citizens of Nazi Germany. The Gestapo were therefore reliant on a network of thousands of informants.

The information passed on by informants typically accused someone of breaking the law or of being a criminal in some way. The information provided was not always based on fact and could often be rumour or suspicion.”

Meanwhile, in completely unrelated news, The Verge reports on a new app from the White House:

“A new official White House app on Android and iOS takes the content from the White House website and copies it into app format. […] A handful of tabs in the app mostly replicate pages that exist on the Trump Administration’s version of the White House website, including news, livestreams, social feeds, and a gallery. A prominent “Get in Touch” button on the social feeds tab includes an option for users to submit a tip to ICE, which takes them to a tip form on the ICE website.”

Wow, am I glad to be living in a time where everything is normal and the historical precedents are not literally screaming at us.